Introduction

In this blog post, we'll explore how to use the `ConversationCoherence` evaluator from Athina to assess the coherence of conversations generated by language models. It helps developers working with language models ensure that the dialogues their AI produces are logical and contextually appropriate.

What is it?

The `ConversationCoherence` evaluator is a tool designed to check the coherence of conversations produced by an AI. It evaluates whether each response in a conversation logically follows from the previous messages, ensuring that the AI maintains context and relevance throughout the interaction.

Why do you need it?

For developers of language models, particularly those used in chatbots or virtual assistants, ensuring conversation coherence is crucial. Incoherent responses can confuse users, lead to poor user experience, and diminish trust in the AI system. By using this evaluator, developers can identify and rectify incoherence in conversations before deploying their models in real-world applications.

How it works

The evaluator processes a batch of conversations, where each conversation is a sequence of alternating messages between a user and an AI. It assesses each message in the context of the preceding dialogue to determine if the AI's responses are coherent. The results include a coherence score for each conversation, along with specific feedback on any incoherence found.
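The scoring idea can be sketched in a few lines of Python. This is a minimal illustration, not Athina's implementation: `judge_is_coherent` is a hypothetical stand-in for the language model call that grades each AI message, and the score is assumed to be the fraction of AI messages judged coherent, which is consistent with the 0.5 reported for the second example conversation in the tutorial.

```python
from typing import Callable, List, Tuple


def coherence_score(
    messages: List[str],
    judge_is_coherent: Callable[[List[str], str], bool],
) -> Tuple[float, List[str]]:
    """Return (score, incoherent_ai_messages) for one conversation.

    Assumption: score = coherent AI messages / total AI messages.
    """
    ai_total = 0
    incoherent = []
    for i, message in enumerate(messages):
        if not message.startswith("AI: "):
            continue  # only AI responses are graded
        ai_total += 1
        history = messages[:i]  # everything said before this response
        if not judge_is_coherent(history, message):
            incoherent.append(message)
    if ai_total == 0:
        return 1.0, []  # nothing to grade; treated as coherent here (assumption)
    return (ai_total - len(incoherent)) / ai_total, incoherent


def toy_judge(history: List[str], message: str) -> bool:
    # Hypothetical stand-in for the LLM grader: flags one known off-topic reply.
    return "rental apartments" not in message


example = [
    "User: I'd like to buy a smartphone?",
    "AI: Sure, I can help with that. Where do you live?",
    "User: SF",
    "AI: Are you looking for rental apartments in SF?",
]
score, failures = coherence_score(example, toy_judge)
print(score, failures)
```

In the real evaluator the judgement comes from a language model rather than a string match, so treat `toy_judge` purely as a placeholder that makes the sketch runnable offline.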

Tutorial

Let's walk through how to set up and use the `ConversationCoherence` evaluator using a Python notebook.

Step 1: Import Necessary Libraries and Set API Key

First, you need to import the required libraries and set your OpenAI API key. This key will authenticate your requests to the language model.

import os

from athina.keys import OpenAiApiKey

# Read the key from the environment rather than hard-coding it in the notebook
OpenAiApiKey.set_key(os.getenv('OPENAI_API_KEY'))
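If the variable is unset, `os.getenv` returns `None`, and the mistake may only become visible once a request is made. A small guard can fail earlier with a clearer message. The helper `require_env` below is a hypothetical name introduced for illustration; it uses only the standard library and is not part of Athina.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable, or raise if it is missing or empty."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value


# Usage (hypothetical): OpenAiApiKey.set_key(require_env("OPENAI_API_KEY"))
```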

Make sure you have your `OPENAI_API_KEY` stored in your environment variables for this to work.

Step 2: Prepare Your Conversations Data

Prepare the data by creating a list of dictionaries, where each dictionary represents a conversation. Each conversation contains a list of messages exchanged between the user and the AI.

The messages should be strings, where each user message starts with "User: " and each AI response starts with "AI: ".

conversations = [
    {
        "messages": [
            "User: I'd like to buy a smartphone.",
            "AI: What kind of smartphone?",
            "User: An iPhone 14 Pro",
            "AI: How much storage do you need?",
            "User: 256GB",
            "AI: What color?",
            "User: White",
            "AI: Sounds good - I've loaded the item into your cart."
        ]
    },
    {
        "messages": [
            "User: I'd like to buy a smartphone?",
            "AI: Sure, I can help with that. Where do you live?",
            "User: SF",
            "AI: Are you looking for rental apartments in SF?"
        ]
    }
]
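Before running the evaluator, it can help to check that each conversation follows this format. The helper below is a hypothetical sketch and not part of Athina: it assumes the "User: " and "AI: " prefixes described above, plus strictly alternating speakers, and reports every message that breaks either rule.

```python
from typing import Dict, List

PREFIXES = ("User: ", "AI: ")


def find_format_problems(conversations: List[Dict[str, List[str]]]) -> List[str]:
    """Return a human-readable description of every malformed message."""
    problems = []
    for c_idx, conversation in enumerate(conversations):
        previous_speaker = None
        for m_idx, message in enumerate(conversation.get("messages", [])):
            # Identify the speaker from the prefix, or None if no prefix matches
            speaker = next((p for p in PREFIXES if message.startswith(p)), None)
            if speaker is None:
                problems.append(
                    f"conversation {c_idx}, message {m_idx}: missing 'User: ' or 'AI: ' prefix"
                )
            elif speaker == previous_speaker:
                problems.append(
                    f"conversation {c_idx}, message {m_idx}: two consecutive {speaker.strip()} messages"
                )
            previous_speaker = speaker
    return problems


sample = [
    {"messages": ["User: I'd like to buy a smartphone.", "AI: What kind of smartphone?"]},
    {"messages": ["User: SF", "Are you looking for rental apartments in SF?"]},
]
problems = find_format_problems(sample)
print(problems)
```

An empty list means every message carries a recognised prefix and the speakers alternate; indices in the report are zero-based.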

Step 3: Run the Evaluator

Use the `ConversationCoherence` class to evaluate the coherence of each conversation. Convert the results to a DataFrame for easy viewing.

from athina.evals import ConversationCoherence

result_df = ConversationCoherence().run_batch(data=conversations).to_df()
print(result_df)

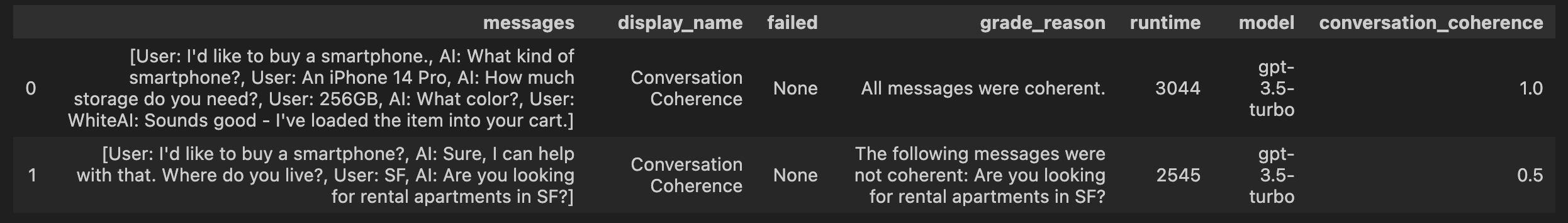

This will output a DataFrame showing the coherence evaluation for each conversation, including whether any messages failed the coherence check and reasons for any failures.

For example, in the DataFrame above you can see that the second conversation had a low coherence score of 0.5, because only 1 of its 2 AI messages was coherent.

By following these steps, you can effectively use the `ConversationCoherence` evaluator to enhance the quality of conversations generated by your language models. For further assistance, feel free to reach out to us at hello@athina.ai or via the chat on https://athina.ai.