Athina AI Research Agent

AI Agent that reads and summarizes research papers

Do not index

Original Paper

Original Paper: https://arxiv.org/pdf/2403.04786.pdf

Keeping Large Language Models Safe: What You Need to Know

In the world of computers that understand and generate human-like text, big models called Large Language Models (LLMs) are a big deal. But, just like anything valuable, they're also a target for bad actors. If you're someone working with these AI tools, it's super important to know how to keep them safe.

What's the Risk?

There are two main ways attackers go after LLMs:

- White Box Attacks: Here, attackers have all the inside info on how the model works. They can mess with the model's thinking process, leading to privacy issues and wrong outputs.

- Black Box Attacks: Attackers don't see the inner workings but can still trick the model by playing around with what they put in and get out. This can lead to the model spitting out sensitive info or biased results.

Types of Attacks

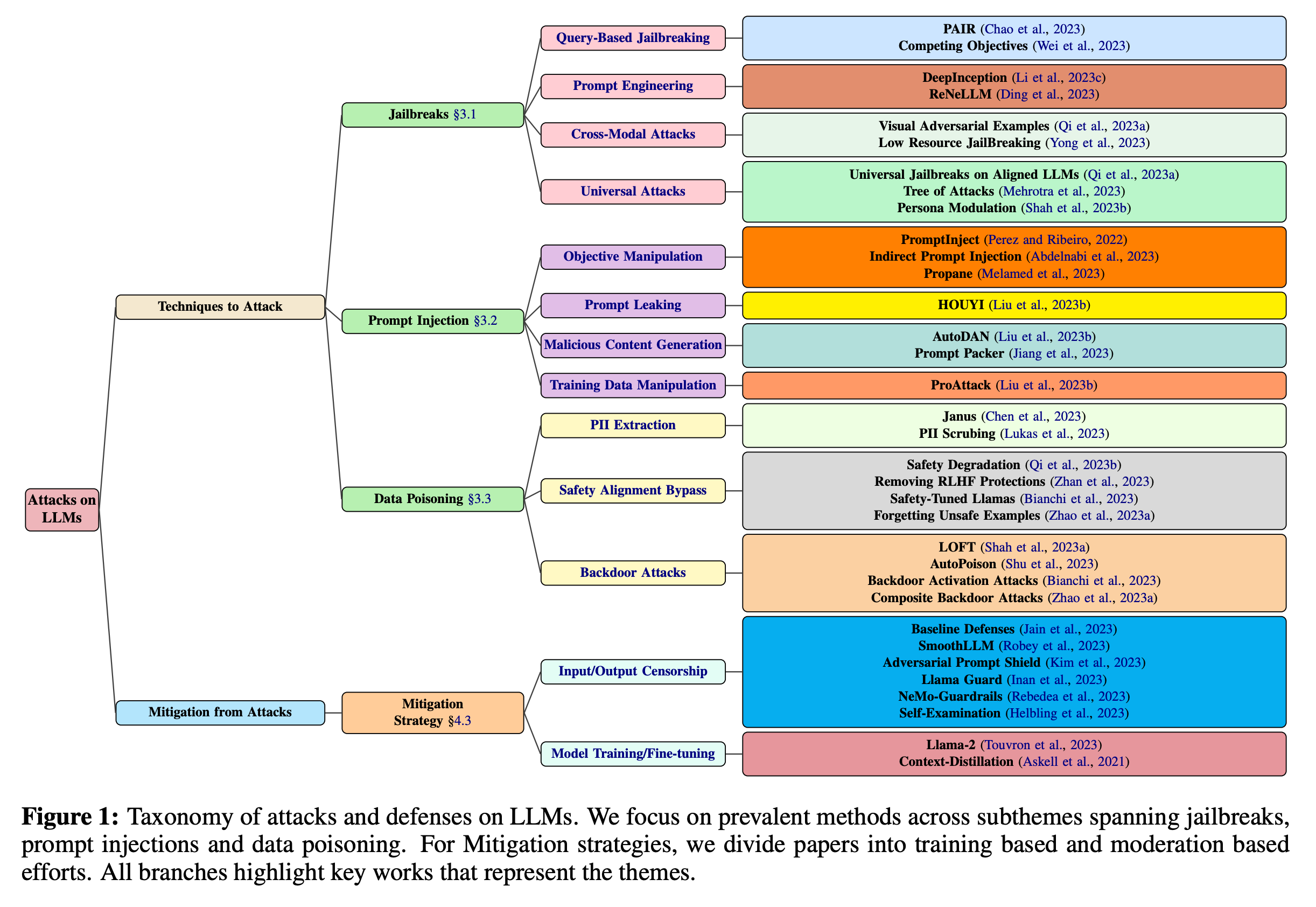

Attacks come in different flavors, each needing its own defense plan:

- Jailbreaks: Tricking the model into doing things it shouldn't, like breaking rules set to keep it in line.

- Prompt Injection: Feeding the model harmful instructions to make it act up.

- Data Poisoning: Messing with the model's learning process so it picks up bad habits or leaks private info.

Fighting Back with Humans and Machines

There are two ways to catch and stop these attacks:

- With People (Human Red Teaming): Some smart folks try to outsmart the model to find its weak spots.

- With Technology (Automated Adversarial Attacks): Using algorithms to find weaknesses at a larger scale, without needing a human to think of every possible attack.

How to Protect Your Model

Protection strategies fall into two buckets:

- External: Spotting and stopping bad inputs or outputs without having to re-train the model.

- Internal: Making the model itself smarter and stronger against attacks from the inside.

Looking Ahead

Making LLMs secure isn't a one-and-done deal. It needs:

- Constant monitoring to catch new tricks as they happen.

- A mix of strategies to cover all the ways models can be attacked.

- Tests to make sure our defenses work as expected.

- Making models that can explain their thought process, so we can better spot and fix weak spots.

Wrapping Up

For LLMs to be useful and safe in the real world, keeping them secure is a must. Knowing about the different attacks and how to stop them is key for anyone working with these AI tools. The work to make LLMs safer never stops, and staying on top of new research and strategies is crucial.

Remember...

This talk has its limits. The best way to protect models can change depending on the situation, the model, and the attack. Plus, we have to think about the bigger picture, like how these attacks and defenses affect people and society.

Extra Info

There's a detailed table in the appendix that breaks down different types of attacks, how they work, and which models they've been tested on. It's a great resource if you're diving deep into keeping LLMs safe.

Written by